This post focuses on how the Random Forest algorithm works and how to use it for predictive modeling problems. It was written for developers and assumes no background in statistics or mathematics.

Random Forest is a supervised learning algorithm based on the ensemble learning method and many decision trees. Random forests are one of the best performing methods for constructing ensembles. They derive their strength from two aspects: using random subsamples of the observations and random subsamples of the features.

Bagging is an ensemble method that uses a technique called bootstrap aggregation (hence "bagging"). The basic idea is to take a bunch of subsets of the dataset, fit a model to each one, and combine the results. Random Forest is a bagging technique: it makes a small tweak to bagging that results in a very powerful classifier.

In the original paper, the selection of each covariate is done with uniform probability. So if you had 100 covariates (features), you would select a subset of them, with each feature having probability 0.01 of being picked on any single draw. If you only had 1 covariate you would select it with probability 1. How many covariates you sample out of all those in the data set is a tuning parameter of the algorithm. Thus, because any given feature is rarely sampled when there are very many of them, this algorithm will not generally perform well on high-dimensional data.
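To make the uniform selection concrete, here is a minimal sketch that estimates how often one particular feature ends up in a randomly drawn subset. The function name `inclusion_frequency` and its parameters `p` (total number of features) and `m` (subset size) are illustrative assumptions, not part of any library. It assumes the subset is drawn without replacement, in which case the chance that a given feature is included is roughly `m / p`.

```python
import random


def inclusion_frequency(p, m, feature=0, trials=20000, seed=0):
    """Estimate how often `feature` appears in a uniform random subset.

    p: total number of features (hypothetical name)
    m: number of features drawn per subset, without replacement
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Every feature is equally likely to be drawn.
        subset = rng.sample(range(p), m)
        if feature in subset:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    # With a single feature, it is always selected.
    print(inclusion_frequency(p=1, m=1))
    # With 100 features and 10 drawn, the estimate should be near 10 / 100.
    print(inclusion_frequency(p=100, m=10))
```

Raising `m` makes each feature more likely to be seen by a given tree, which is why the subset size is treated as a tuning parameter.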

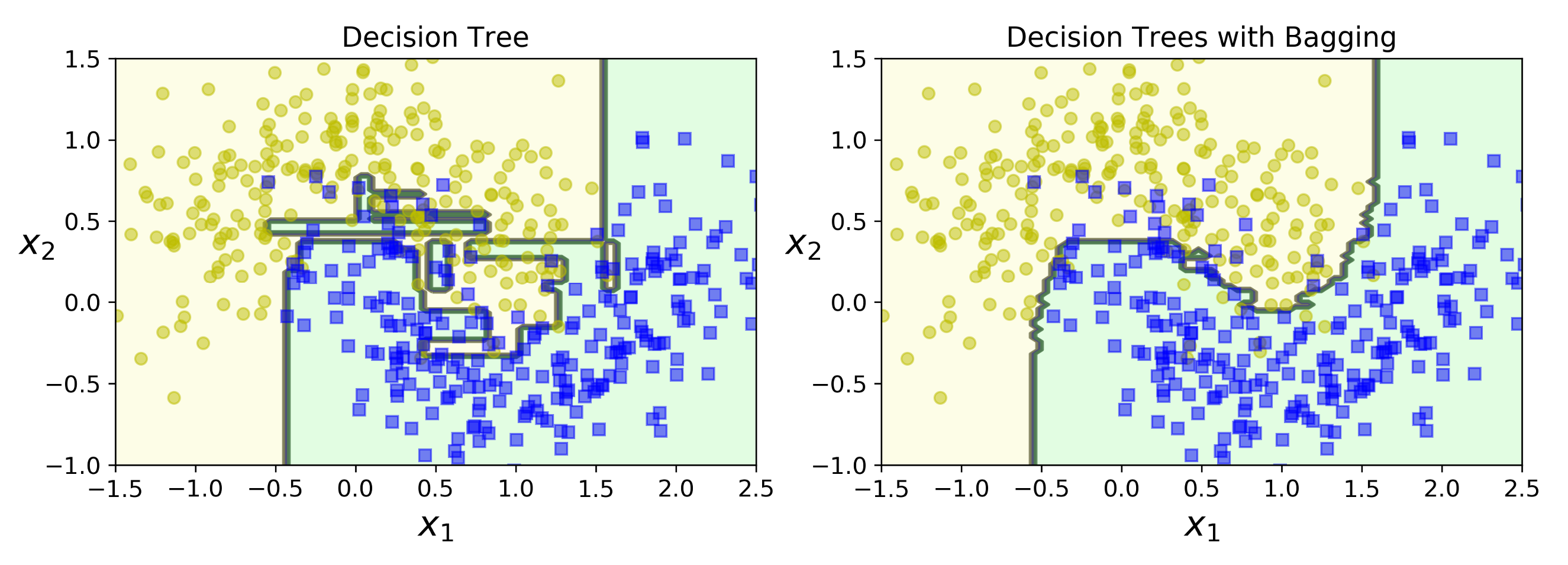

Random forest creates bootstrap samples across observations, and for each fitted decision tree a random subsample of the covariates (features, or columns) is used in the fitting process. A notable limitation of plain bagging is that the trees fit to the bootstrap samples tend to be highly correlated, especially when one or two variables are much stronger predictors than the rest.
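The two kinds of randomness described above can be sketched in a few lines. This is an illustration under stated assumptions, not a full random forest: the helper names `draw_tree_sample` and `subset` are hypothetical, rows are drawn with replacement (the bootstrap), and columns are drawn once per tree without replacement, following the per-tree description in this post. Many implementations, scikit-learn among them, draw the feature subset at every split instead.

```python
import random


def draw_tree_sample(n_rows, n_features, max_features, rng):
    """Pick the rows and columns that one tree would be trained on."""
    # Bootstrap: sample row indices WITH replacement, same size as the data.
    rows = [rng.randrange(n_rows) for _ in range(n_rows)]
    # Feature subsample: sample column indices WITHOUT replacement.
    cols = sorted(rng.sample(range(n_features), max_features))
    return rows, cols


def subset(data, rows, cols):
    """Build the per-tree training table from the chosen rows and columns."""
    return [[data[r][c] for c in cols] for r in rows]


if __name__ == "__main__":
    rng = random.Random(42)
    # Toy table: 6 observations, 4 features.
    data = [[r * 10 + c for c in range(4)] for r in range(6)]
    for tree in range(3):
        rows, cols = draw_tree_sample(len(data), 4, max_features=2, rng=rng)
        print(tree, rows, cols, subset(data, rows, cols)[:2])
```

Because each tree sees different rows and different columns, the fitted trees are less alike than under plain bagging, which is the point of the tweak.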

Random forest is an ensemble learning model that uses the bagging method. The bagging method randomly draws multiple training samples from the original training set. In general, if you take a bunch of bootstrapped samples of your original dataset, fit models $M_1, M_2, \dots, M_b$, and then average all $b$ model predictions, this is bootstrap aggregation, i.e. bagging. Random forest is a bagging method as well; however, in addition to drawing random samples from the training set with replacement, it also draws a random subset of the features. This is done as a step within the random forest algorithm.
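As a rough illustration of bootstrap aggregation, the sketch below fits $b$ models to bootstrapped samples and averages their predictions. To stay self-contained it uses a simple least-squares line as the base model rather than a decision tree; that substitution, and the names `fit_line` and `bagged_predict`, are assumptions made for this example only.

```python
import random
from statistics import mean


def fit_line(xs, ys):
    """Least-squares fit of y = a + b*x; returns a prediction function."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        # Degenerate bootstrap sample (all x identical): predict the mean.
        return lambda x: my
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    intercept = my - slope * mx
    return lambda x: intercept + slope * x


def bagged_predict(xs, ys, x_new, b=50, seed=0):
    """Fit b models M_1..M_b on bootstrap samples and average their predictions."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(b):
        # Bootstrap sample: n indices drawn with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    # Aggregation step: average the b predictions.
    return mean(m(x_new) for m in models)


if __name__ == "__main__":
    xs = list(range(10))
    ys = [2 * x + 1 for x in xs]
    print(bagged_predict(xs, ys, x_new=4.0))
```

For classification, the averaging step would typically be replaced by a majority vote over the models' predicted classes.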

The name "bagging" is an acronym-like word: a portmanteau of "bootstrap" and "aggregation".
